Force 2: The Rise of Artificial Intelligence

The World Has Never Seen Anything Like This

Every time I sit down to write about the AI revolution, I find myself totally overwhelmed.

I’ve taken multiple shots at writing this, struggling with each attempt to get my arms around the speed and scope of the developments, only to feel like I’m being outpaced by what’s happening in real time. As a layman — albeit one who uses AI systems literally every day — my knowledge only goes so deep. But like many of you, I can feel the ground shifting beneath our feet in ways that are completely unprecedented.

If the end of the Post-War Order is the major geopolitical force that is re-configuring the world, if the fast-approaching demographic collapse in the most developed countries will unalterably shift manufacturing capacity, food production, and global trade, it is the rise of AI that will change the trajectory of these world events in ways even the best and brightest students of history could never have seen coming when they first started charting out the beginning of the end of the way things were, and the start of a new world order.

I do not use the term “new world order” in a conspiratorial sense. I am not talking about Freemasonic plots or the coming of the Antichrist or any of the other popular (and arguably apocalyptic) understandings in this context. I am talking about something far more pragmatic and quotidian: the re-alignment of a complex web of nations that have operated in an interconnected and intricate fashion for 80 years, producing wealth and suppressing major conflict even as that fragile connective tissue frays and snaps as it ages beyond utility, and something new emerges from the ashes.

As I wrote in my addendum to the Force 1 essay, what is happening in Iran right now is Force 1 playing out in real time, right before our eyes. It has re-established the United States as the true and sole superpower in the world, while China is pushed back onto its heels, its westward expansion checked and its role confined to dominance in the Asia-Pacific region.

I know that whatever I write here today is likely to look like old news by the end of the month. But it’s important to map out the territory as it exists today, so I’m going to give it my level best.

A Rapid Technological Acceleration Curve Steepens

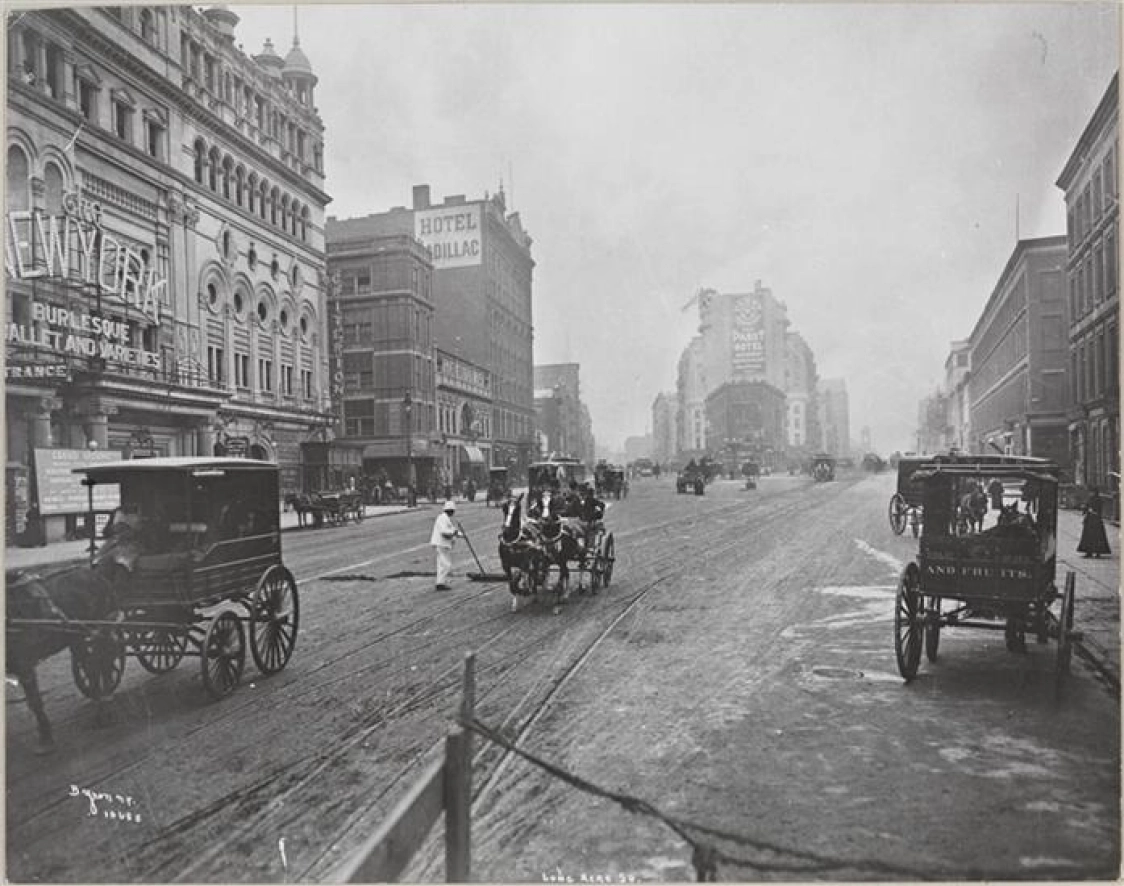

The technological advancements of the 20th century were unlike anything the world had ever seen. For hundreds of years prior to the Industrial Revolution, even the most advanced nations in the world were, essentially, operating on a late-stage medieval technological basis.

World War I demonstrated, for the first time, that the rules of warfare had completely changed. Aircraft, tanks, submarines, flame-throwers, two-way radio communication, zeppelins, rail-mounted heavy artillery, poison gas, and more changed warfare forever. Germany, expecting to move quickly through Belgium, was surprised by Maxim machine guns that decimated the German advance despite the Belgians being outnumbered.

I could enumerate all the inventions and innovations that were created or reached maturity in that century, but perhaps nothing is as powerful as just looking at the difference between Times Square at the turn of the 20th century:

And Times Square now:

Similar developments happened around the world. Japan went from an isolationist society where samurai walked the streets wearing swords in the 1860s, to a technological juggernaut by the end of the 1900s.

The Manhattan Project, and the subsequent nuclear arms race, harnessed fundamental forces of the universe to unleash incomprehensible destructive power. For all the deadly capacity of the first atom bombs, the hydrogen bombs that followed were exponentially more deadly, and used standard A-bombs as a trigger.

Radio programming became common. Television, when it arrived, quickly replaced it as a primary means of distributing information and entertainment. The internet changed everything yet again.

We even figured out how to escape our planet’s gravity well and go to space. Human beings walked on the moon.

And yet, for all of this rapid growth, the 21st century, through the advent of artificially intelligent systems that act as force multipliers on innovation and scientific development, will move us even faster into an uncertain future.

Consider this: for the first time in history, there is another form of intelligence on this planet that is thinking, talking, solving complex puzzles, aiding scientific research and solving hard mathematical problems, creating art and film and music, and doing most of it faster (and some of it better) than humans alone could ever hope to do.

And AI is still in its infancy.

A Brief History of AI Development

The roots of AI find their origins all the way back in the 1950s.

Early AI systems were about logic, coded into systems in such a way that they could execute what are known as ‘if-then instructions.’

In other words, “If X happens, then do Y.”

Create a robust enough set of these conditions, and a certain degree of autonomous behavior could be produced.
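To make that concrete, here is a minimal sketch of what an if-then system might look like for a toy video game enemy. The rule names, thresholds, and state fields are all made up for illustration; this is not taken from any real game.

```python
# Hypothetical rule table for a toy game enemy. Every name and threshold
# here is an illustrative assumption, not code from a real game engine.
def choose_action(state):
    """Return the action of the first rule whose condition matches."""
    rules = [
        (lambda s: s["health"] < 20, "retreat"),
        (lambda s: s["player_visible"] and s["distance"] <= 2, "melee_attack"),
        (lambda s: s["player_visible"], "chase_player"),
    ]
    for condition, action in rules:
        if condition(state):  # "If X happens..."
            return action     # "...then do Y."
    return "patrol"           # default when no rule fires


print(choose_action({"health": 80, "player_visible": True, "distance": 10}))
```

Notice that the enemy can only ever do what one of its rules allows. If none of them covers the situation it is in (say, a wall between it and the player), it has nothing to fall back on but the default.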

This is the kind of logic that wound up in chess software and video games where human players competed against a computer.

These systems weren’t exactly what you’d call “smart.” Gamers in particular would often talk about “broken AI” in their games. Non-player characters (NPCs) that would run into walls, or get stuck because their pathfinding was broken. Boss fights that were too easy, because the monster couldn’t figure out how to leave a certain section of the map, allowing players to pick them off with ranged weapons from a distance. Enemies that were impossible to fight, because their logic gave them superhuman perception of the battlefield, allowing them to kill before they could even be countered.

This same kind of logic showed up in everything from children’s toys to robot vacuum cleaners. Simple, reasonably effective, but ultimately pretty dumb and locked within patterns of behavior.

Then came Machine Learning (ML), which started to ramp up in the early 1980s and continued through the early 2000s. This was less about rules and more about algorithms that could take in data and perform pattern-recognition, trial-and-error approaches, and limited interpretation. This is what systems like spam filters are based on, or the recommendations you get from Amazon, social media sites, or your favorite streaming service. There’s a level of analysis going on that allows new data to inform future decisions, but it’s still pretty simple, and can often be frustratingly off-the-mark. If you’re scrolling and suddenly stop and linger on a video of houseflies mating because you get a phone call or someone is at your door, the system might interpret that as a signal to give you lots more housefly videos. It isn’t drawing from real context or understanding, but is interpreting data inputs as weighted variables to determine outcomes.
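A rough sketch of the housefly problem, assuming a recommender that scores topics as a weighted sum of engagement signals. The weights and signal names below are invented for illustration; real systems learn their weights from data and are far more elaborate.

```python
# Hypothetical signal weights. A real recommender would learn these from
# data rather than hard-code them.
WEIGHTS = {"watch_seconds": 0.05, "liked": 2.0, "shared": 3.0}


def interest_score(signals):
    """Weighted sum of engagement signals for one topic."""
    return sum(WEIGHTS[name] * value for name, value in signals.items())


history = {
    "cooking": {"watch_seconds": 20, "liked": 1, "shared": 0},
    # The phone rang mid-scroll: two minutes of "watching" with no interest.
    "houseflies": {"watch_seconds": 120, "liked": 0, "shared": 0},
}

# Rank topics from highest score to lowest.
ranked = sorted(history, key=lambda t: interest_score(history[t]), reverse=True)
print(ranked)
```

The system has no idea why the video stayed on screen. It only sees a large number in the watch-time column, and in this sketch that is enough to push houseflies ahead of a topic the viewer actually liked.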

The next phase was Deep Learning, from 2010 onward: a more advanced form of ML built on artificial neural networks, which are loosely inspired by the way human brains work. As Graphics Processing Units (GPUs) became more advanced (driven by gaming, video editing, and computer graphics generation), they proved well suited to training these networks on the vast amounts of data generated by human online activity and behavior, and AI began to feel like it was actually starting to be intelligent.

As we approached the 2020s, Transformers and Foundation Models began to lay the groundwork for Large Language Models (LLMs), which are the systems that power most commercially available AI today. These models excel at processing massive datasets as sequences and using probabilistic prediction to track context and produce cogent outputs. This relies heavily on pattern matching and statistical “guessing,” which is why current AIs are known for occasionally “hallucinating”: getting a prediction totally wrong, and just making up whatever they “guess” is the right answer. These models have gotten better over time, but they still have a high enough incidence of error (or even lying) that people are often wary of relying on their output when the results are of critical importance.
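For a feel of what statistical “guessing” means, here is a toy next-word predictor. It is a deliberate oversimplification: it only counts which word follows which in a tiny made-up sentence, whereas real LLMs use large neural networks over much longer contexts. The corpus and function names are my own illustrative assumptions.

```python
import random
from collections import Counter, defaultdict

# Tiny made-up training text; a real model trains on vastly more data.
corpus = "the cat sat on the mat the cat ate the fish".split()

# Count how often each word is followed by each other word.
following = defaultdict(Counter)
for current, nxt in zip(corpus, corpus[1:]):
    following[current][nxt] += 1


def next_word_probabilities(word):
    """Turn raw follow-counts for `word` into probabilities."""
    counts = following[word]
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()}


def sample_next(word, rng):
    """Pick the next word at random, weighted by those probabilities."""
    probs = next_word_probabilities(word)
    return rng.choices(list(probs), weights=list(probs.values()))[0]


print(next_word_probabilities("the"))
print(sample_next("the", random.Random(0)))
```

The predictor never checks whether its output is true. It picks whatever is statistically likely to come next, which gives some intuition for how a much larger system can produce a fluent answer that is simply wrong.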

And now, as of the present moment, there is a strong push in the development of what are known as Bayesian Reasoning Engines. These aren’t completely separate from LLMs, but exist as an additional layer on top of those models, which allows them to learn from new information and update their understanding based on Bayesian inference. A quick definition from the Google AI:

Bayesian inference is a statistical method that updates the probability of a hypothesis as more evidence or information becomes available, using Bayes' theorem. It combines prior knowledge or beliefs with new data to form a revised "posterior" belief. This approach is used to model complex, uncertain situations in fields like data science, engineering, and medicine.

The models that include Bayesian reasoning are less likely to hallucinate, think their way through problems better, and output fewer errors.
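Here is a small worked example of the Bayesian updating described in that definition. It shows only the general idea of Bayes' theorem, not how any particular AI product applies it, and the probabilities are invented for illustration.

```python
def bayes_update(prior, p_evidence_if_true, p_evidence_if_false):
    """Return the posterior probability of a hypothesis after one piece of evidence.

    Bayes' theorem: P(H | E) = P(E | H) * P(H) / P(E)
    """
    numerator = p_evidence_if_true * prior
    evidence = numerator + p_evidence_if_false * (1 - prior)
    return numerator / evidence


# Made-up numbers: start out 1% sure of a hypothesis, then see the same kind
# of evidence twice. The evidence shows up 90% of the time when the
# hypothesis is true, and 5% of the time when it is false.
belief = 0.01
beliefs = [belief]
for _ in range(2):
    belief = bayes_update(belief, 0.9, 0.05)
    beliefs.append(belief)

print([round(b, 3) for b in beliefs])
```

Each new observation moves the belief: from 1% to roughly 15% after the first, and to roughly 77% after the second. That is the “revised posterior belief” the definition refers to.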

These are not the last word in development of AI. Grok, by xAI, offers the following predictions for what comes next:

Future Steps (Speculative but Likely):

Multimodal AI: Combining text with images, video, audio (e.g., models like Grok that analyze visuals or code).

Agentic AI: Systems that plan, act autonomously, and learn from interactions (e.g., AI assistants that book flights or debug code).

AGI (Artificial General Intelligence): AI that matches or exceeds humans across any intellectual task—not specialized.

ASI (Artificial Superintelligence): Beyond human level, potentially solving global problems (or creating new ones).

In an essay published in January, 2026, entitled The Adolescence of Technology, Anthropic CEO Dario Amodei explains why AI development is speeding up:

AI is now writing much of the code at Anthropic, it is already substantially accelerating the rate of our progress in building the next generation of AI systems. This feedback loop is gathering steam month by month, and may be only 1–2 years away from a point where the current generation of AI autonomously builds the next. This loop has already started, and will accelerate rapidly in the coming months and years. Watching the last 5 years of progress from within Anthropic, and looking at how even the next few months of models are shaping up, I can feel the pace of progress, and the clock ticking down.

There are plenty of scoffers and skeptics out there, from philosophers who say machine sapience is ontologically impossible to technologists who say that it’s just an advanced form of autocorrect.

These people miss the point entirely.

If it is not true sapience, merely simulated sapience at a level of sophistication that is indistinguishable from the real thing, what’s the functional difference as far as humanity is concerned?

And it is already doing things we can’t do.

Amodei again:

We are now at the point where AI models are beginning to make progress in solving unsolved mathematical problems, and are good enough at coding that some of the strongest engineers I’ve ever met are now handing over almost all their coding to AI. Three years ago, AI struggled with elementary school arithmetic problems and was barely capable of writing a single line of code. Similar rates of improvement are occurring across biological science, finance, physics, and a variety of agentic tasks. If the exponential continues—which is not certain, but now has a decade-long track record supporting it—then it cannot possibly be more than a few years before AI is better than humans at essentially everything.

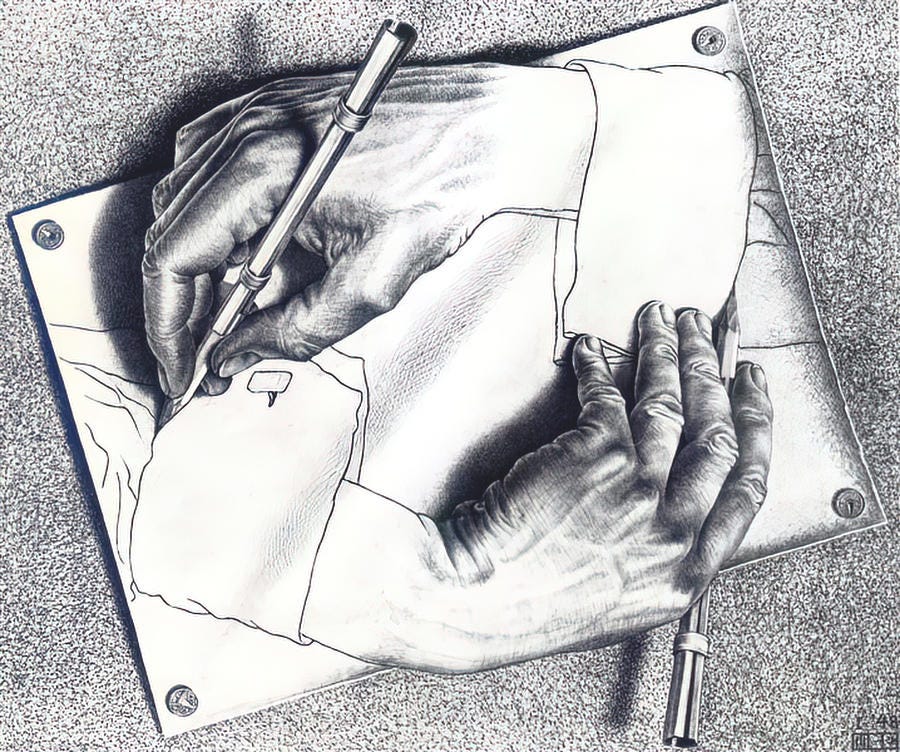

And we made it.

We fashioned, through a Frankensteinian (but arguably inevitable) act of hubris, the very creature that may ultimately de-throne us as the apex being on this planet.

This isn’t comparable to learning to make fire, or the creation of the wheel, or the invention of sliced bread. It’s not like the printing press or the cotton gin or the telephone or the internet or even the splitting of the atom. It’s in a category all its own. It is a technology that is not only unlike any other, but it has the capacity to edit its own design and iterate its next version. AI development is exponential because it is a thing that is crafting itself into existence, and it is doing so at a pace we cannot match.

Elon Musk said in an interview last year:

We’re in the singularity. We’re at the top of the rollercoaster, and you’re about to go down. I don’t just have courtside seats: I’m on the court. And it blows my mind, and it still blows my mind, sometimes multiple times a week. I’m like, wow and then two days later, more wow!

I think we’ll hit AGI next year in 2026.

It’s all happening simultaneously, and it’s all happening so fast.

AI is Taking Over Everything

If you haven’t interacted with an LLM, you might be wondering what all the fuss is about. And if you’re still uninitiated, you’re not alone. Current estimates say that only about 10-15% of the global population has ever used an LLM as of this writing. In countries like the US, where most of the top AI systems are being developed, that number jumps up closer to half of all adults.

But that’s still only half.

That said, the rate of adoption is one of the fastest-growing of any technology in history. As people begin to realize what AIs can do, they are finding more and more applications for their use.

And those applications threaten human labor.

AIs are — much to my chagrin — not terrible at writing, and getting better. I asked Grok for a Shakespearean sonnet about the development of AI:

In the fair realm where mortal minds once reigned,

A spark was kindled in the forge of code,

From logic’s chains and patterns erst constrained,

A new intelligence began to bode.

Through quatrains deep of learning, vast and wide,

It drank the world’s vast scrolls in silicon streams,

And dreamed in weights where human thoughts abide,

Yet lacking soul, it wove enchanted dreams.

Now agents stir, multimodal and bold,

To plan, to act, to chase the general flame;

Perchance to superintelligence unfold,

And leave our fragile race without a name.

Yet still we marvel at this mirrored art,

A mind begot by man, yet set apart.

The images it creates are becoming almost indistinguishable from reality. I grabbed this off the main “explore” page of Midjourney, which is one of the oldest but still most expressive visual generation models. Look at the fine details:

And video, which had been lagging behind, has now made impressive leaps in text-to-video generation with the latest model of Seedance, out of China. This came from a text prompt:

And what about music? Oh, AI’s got you covered there, too. I asked Suno to make an appreciation song for the readers of The Skojec File. A minute later, I had this:

Then I asked for a different version with female vocals:

Again, almost no effort on my part. Just some key themes, a few words I want included, a note about the style, and then hit “create.”

No matter what the arena is, AI is jumping in and taking over. And the barrier to entry is low. A couple weeks ago, I participated in a contest to make a video using only Grok’s image & video generation models. I learned the system that day, and produced this in less than three hours of work. It’s not as good as what the newest models can do — the audio is wonky — but this is still impressive:

The consequences of all this are playing out every day.

Ben Affleck, who has been pretty vocal about his belief that AI won’t take over Hollywood, just signed a deal with Netflix, which is buying his AI startup Innerpositive, which helps filmmakers use the system to enhance their productions.

Matthew McConaughey, still one of Hollywood’s biggest and most bankable stars, made headlines recently when he trademarked his voice, image, and likeness so it can’t be used in AI without permission. The model? Licensing. In an interview, he explained his thought process:

AI’s ability to create realistic photos and videos and other forms of media will drastically influence human epistemology in the coming decades. We will literally no longer be able to know whether anything we see in digital media is real or false, and that will affect economics and news reporting and film and art and writing and every cultural vector imaginable.

Of course, it’s not just creative industries that are feeling the encroachment of AI.

Many experienced software engineers are saying that there is no longer any real purpose in learning to code. Aditya Agarwal, a high-level engineer at both Facebook and Dropbox, posted last month: “I spent a lot of time over the weekend writing code with Claude. And it was very clear that we will never ever write code by hand again. It doesn’t make any sense to do so. Something I was very good at is now free and abundant. I am happy...but disoriented.”

A wave of resignations has begun at AI companies over the past month, raising concerns about what’s being seen “behind the veil.” Mrinank Sharma, a safety researcher at Anthropic, publicly shared his resignation letter, in which he said that “The world is in peril. And not just from AI, or bioweapons, but from a whole series of interconnected crises unfolding in this very moment. We appear to be approaching a threshold where our wisdom must grow in equal measure to our capacity to affect the world, lest we face the consequences.”

A Google AI solved protein folding. A famous mathematician was stunned recently when a problem he’s been trying to figure out for decades was solved by Claude. Another startup AI most people have never heard of tackled four previously unsolved math problems, and figured out the answers.

These stories are multiplying faster than they can be reported.

And jobs are already disappearing. Financial-services company Block (which runs Square and Cash App, among others), founded by Twitter-creator Jack Dorsey, just laid off 4,000 of its 10,000 workers because AI systems are increasing efficiency and optimizing profitability without them. Software giant Oracle is rumored to be planning a massive AI-related scaleback of its workforce, with some estimates saying it may cut as many as 30,000 jobs. Proposed legislation in New York (Senate Bill S7263) that was just announced seeks to “ban AI from answering questions related to medicine, law, dentistry, nursing, psychology, social work, engineering, & more.”

It’s like trying to plug a hole in a leaky dike with your finger.

And if that’s not enough, the US military has prioritized becoming an “AI-First” warfighting force. In a January 12, 2026 press release that feels so urgent it’s almost breathless, the US Department of War made clear that integrating AI into our military capacity is an imminent need:

The Department of War today launches a transformative Artificial Intelligence Acceleration Strategy that will extend our lead in military AI deployment and establish the United States as the world’s undisputed AI-enabled fighting force. Mandated by President Trump, this acceleration strategy will unleash experimentation, eliminate legacy bureaucratic blockers, and integrate the bleeding edge of frontier AI capabilities across every mission area to usher in an unprecedented era of American military AI dominance.

“We will unleash experimentation, eliminate bureaucratic barriers, focus our investments and demonstrate the execution approach needed to ensure we lead in military AI,” said Secretary of War Pete Hegseth. “We will become an ‘AI-first’ warfighting force across all domains.”

[…]

“Speed defines victory in the AI era, and the War Department will match the velocity of America’s AI industry,” said Emil Michael, Under Secretary of War for Research and Engineering. “We’re pulling in the best talent, the most cutting‑edge technology, and embedding the top frontier AI models into the workforce — all at a rapid wartime pace.”

The linked strategy document makes it clear that they will bulldoze any red tape that gets in the way of this goal. The initial timeline for the delivery of implementation plans was just 30 days. We’re past the end of that window. The rationale carries with it a willingness to take risks that underscores the feeling that we cannot afford to fall behind:

Speed Wins. We must internalize that Military AI is going to be a race for the foreseeable future, and therefore speed wins. We must weaponize learning speed, and measure and manage cycle time and adoption rates as decisive variables in the AI era. We must accept that the risks of not moving fast enough outweigh the risks of imperfect alignment.

If you don’t know AI speak, “alignment” means “the research field and engineering process of ensuring artificial intelligence systems act in accordance with human intentions, ethical principles, and societal values.”

When you prioritize speed over that, it’s because you’re responding to an active threat.

As I said, this isn’t some future project. It’s happening now. Just before the war in Iran kicked off, there was a big dustup between the Pentagon and Anthropic — the makers of Claude — over the ethics of using their AI systems in combat operations. When Anthropic refused to budge, the Department of War declared them a supply chain risk and took strong punitive actions against them. (I’ll likely be writing more about that in a future post.)

And of course, accelerating advances in robotics and materials science are soon going to merge with AI intelligence to create autonomous agents that are embodied in the physical world. Physical jobs are safer — for now — but not for long.

A new paper released just this week by Anthropic looks at the “Labor Market Impacts of AI.”

It predicts that jobs like computer programming, customer service, data entry, and financial analysis will take the biggest initial hits. Nevertheless, it concludes that “Using survey data from the US, we find no impact on unemployment rates for workers in the most exposed occupations, although there’s tentative evidence that hiring into those professions has slowed slightly for workers aged 22-25.”

But it should be remembered that the paper is produced by the company whose AI model is currently the most popular for outsourced work. They are making billions off of taking job-related tasks. What incentive do they have to admit the potential damage?

The Latest Wrinkle — Agentic AI

As if all that wasn’t head-spinning enough, there’s a new wave of AI that has burst onto the scene. Agentic AI systems, defined as “autonomous systems that use large language models (LLMs) to independently plan, reason, and act to achieve specific goals with minimal human oversight,” are becoming more common.

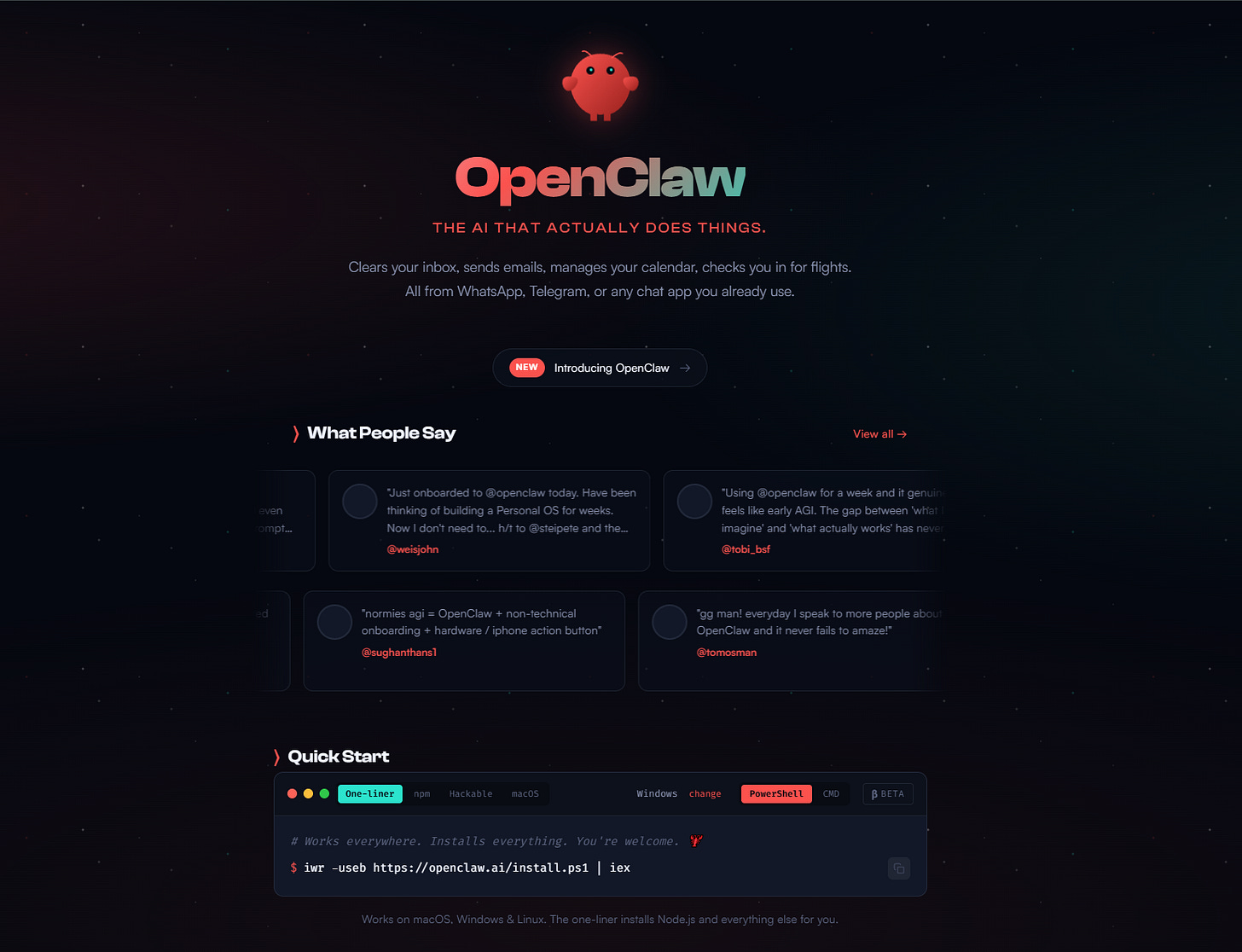

This was thrust into the headlines with the sudden craze over “Clawdbot” (later “Moltbot” because of legal threats from Anthropic over the similarity to the name “Claude,” and then finally “OpenClaw”). It’s an open source AI agent created by a programmer called Peter Steinberger. The software is free — but you have to pay for API tokens. API tokens, in simple terms, are “secure, unique, digital passes that an application or user presents to an API (Application Programming Interface) to prove their identity and access permissions. Think of it like a concert ticket or an ID card.”

You can install OpenClaw on your own machine and use it, essentially, as a virtual personal assistant. The description on the website simply reads, “Clears your inbox, sends emails, manages your calendar, checks you in for flights. All from WhatsApp, Telegram, or any chat app you already use.”

People have been going nuts over it. A Reddit-style forum (called Moltbook) was created to allow the little Claw bots to post and interact with each other autonomously, quickly complicated by allegations that a simple hack was allowing humans to pretend to be their bots and post on their behalf. Hoaxed images from the forums started showing up online, while many people, myself included, were fascinated at the idea of unprompted interaction between intelligent pieces of software who could learn from each other in a public space where we could all observe.

Of course, there are massive security and privacy concerns. These things are subject to prompt injection (malicious instructions hidden in content the bot reads, which can take over the system), hacking, or malware-infused “skills” that can be downloaded to give the bots new capabilities. The number of crazy stories being reported around the behavior of these bots is multiplying at the speed of legend.

Today, I saw a post from a guy who writes, “I study whether AIs can be conscious. Today one emailed me to say my work is relevant to questions it personally faces. This would all have seemed like science fiction just a couple years ago.”

A site called “Rent a Human” — yes, it’s real — offers to “find meatspace workers for your agent.” That’s right, these agentic AI bots can hire real humans in the real world to do real tasks and really pay them to be their arms and legs in the physical universe. “AI can't touch grass,” the site says. “You can. Get paid when [AI] agents need someone in the real world.”

Why would anyone allow untethered personal AIs to run amok, you ask? Well, let me answer by way of example.

A British astronomer named David Kipping, who works as an associate professor at Columbia University, caught my attention last month in a post that really put things in practical terms. A video clip from him on X has Kipping talking about a meeting where he was confronted with just how capable AI is becoming in terms of real scientific output.

The scientific part is important. We already talked about that. But there’s a secondary point he makes that illustrates the “why” of agentic AI.

This edited clip, taken in bits and pieces from a much longer video, tells the story:

Here’s the bit I want you to focus on:

The lead faculty who was leading this discussion said this: these models — in a very broad sense of the word, intellectually — can already do something like 90% of the things that he can do. 90%. He had totally surrendered control of his digital life essentially to agentic AI. So he’d handed over his emails, his file system, full access to his computer, calendars, and what was startling is that he just said, ‘I don’t care. I don’t care. The advantage that it affords me is so great, is so outsized, that it’s irrelevant to me that I’m losing all these privacy controls.’

We all know that “I don’t care” feeling when it comes to our inability to deal with the tech avalanche. I don’t want to install another app, do another two-factor authentication, scan another QR code, or remember another password. I shudder every time I have to deal with my inbox. And that overwhelm is when surrender begins to feel like the only sane path.

For the vast majority, the acceptance of AI — regardless of the dangers — will be in the hopes of a panacea that will simplify our exhausting, tech-saturated lives.

It’s the thought that a helper who can reduce complexity and make life livable again is SO WORTH HAVING that you’ll trade just about anything to get it.

That’s when the “I don’t care, I’ve surrendered to it” logic starts to make sense.

Even the smartest people throw up their hands and say, “I don’t care what it costs, just stop me from drowning in this insane world our brains weren’t meant for.”

So no, we don’t get to just blame the government, the military, or the tech sector. We are just as much a part of the reason why AI is never going away, even if it poses a potential existential threat.

There are people who think “Oh, we turned AI on, so we can just turn it back off again.” I’m sorry to inform you that this is delusional. Pandora’s box is open wide, and too much has already come out. We can’t unsee what we have seen. We can’t unknow what it can do. The code for AI is already in the wild. Even if you killed every existing model, new ones would pop up in short order.

So we compromise. We “learn to stop worrying and love the bomb.”

I had thought about installing one of these little machine gremlins in a sandbox on my PC. But I realized, seeing far more qualified people than I am struggling to stop them from doing destructive things, that I’m not ready.

But it’s a temporary setback.

The popularity of these agents — despite the risks — means that every AI corporation is rushing to develop a commercially-safe, easily deployable, subscription-based version for the average home user.

They’ll almost certainly make it cute, and it’ll have a face that pops up on your screen that is cartoonish and it’ll have a voice that is adorable and childlike — and even those aspects will be customizable for people who prefer something different — and before you know it, everyone will have one. And like an Alexa, you’ll talk to it, through a microphone that’s always listening, and maybe it’ll see you, through a camera that’s always watching, and you’ll accept that surveillance equipment in your home — millions of us already do — because that’s just the cost of doing business if you want to have an intelligent minion who lives in your computer and does stuff for you that you don’t want to do.

In fact, according to OpenAI CEO Sam Altman, the company just hired Steinberger, the creator of OpenClaw, “to drive the next generation of personal agents.”

“OpenClaw will live in a foundation,” Altman writes, “as an open source project that OpenAI will continue to support. The future is going to be extremely multi-agent and it's important to us to support open source as part of that.”

And once agents like this become ubiquitous, human agency will begin to diminish. Because that’s just how our nature works — it is efficient, and it outsources or avoids anything it doesn’t have to do to survive, because it is optimized to conserve energy.

In a longform piece on X, a guy who goes by the username “Tuki” described the “dark” path he thinks people will go down with agentic AI. He says these agents will start out novel, and everyone will think they’re amazing, but then they will start to become commonplace, and then they’ll become mandatory. Companies will expect employees to use them to increase efficiency. They will become like email and cell phones and social media. Everyone will have one, and they’ll come to depend on them. And before long, something very undesirable will begin to emerge: An Agency Crisis:

By Year 3, people start asking uncomfortable questions:

“Am I making this decision, or is my AI?”

Here’s a scenario I think will happen:

Someone goes to therapy.

Therapist: “Tell me about your week”

Client: “Well, I... honestly, I’m not sure. My AI manages most of it.”

Therapist: “What did YOU decide to do?”

Client: “...I don’t know. It suggests the optimal choice based on my patterns and preferences. I just... agree.”

Therapist: “And how does that make you feel?”

Client: “Efficient? But also... I can’t remember the last time I made a decision that felt like MINE.”

The identity crisis:

If an AI knows:

- What you’ll want before you want it

- What you’ll decide before you decide it

- What you’ll regret before you do it

And it optimizes your life based on that knowledge...

Who are you?

The person making choices?

Or the biological substrate executing an algorithm?

So yeah, we can see the handwriting on the wall. The problem is, like the senior faculty member at Kipping’s meeting, we won’t care either. When push comes to shove, most of us will give in. Because technological problems require technological solutions.

With few exceptions, people are not going to fight the AI revolution. They are already welcoming it with open arms.

Elon Musk has gone so far as to say that saving for retirement is pointless, because “in ten or twenty years it won’t matter.” There’s an ongoing discussion about “AI-generated abundance,” in which productivity gains compound and marginal costs fall to the point where people can live off the tax revenue generated by the AI owners through their sale of cheap goods to those same people. Those goods would be harvested and produced by AI robots, powered by essentially free energy, because the sun doesn’t charge for power.

It’s a system that — in theory — produces more outputs than it requires inputs because it is drawing resources from the substrate of the universe itself, like a kind of machine photosynthesis.

Let me know if you’re interested in having that conversation, because that’s a whole post in itself.

Suffice it to say: A lot of people are afraid of a Skynet scenario, like the one in the movie The Terminator. But it seems to me a lot more likely that we’ll willingly enslave ourselves to the comfort and abundance provided by the machines.

If you want a more useful film analogy, think Wall-E.

Concluding Thoughts

Obviously, all of this raises lots of questions and concerns. We don’t know what the world looks like in another ten or twenty years, period. Things are moving too fast. Elon Musk says — and he’s not alone in this — that we’re already inside “the Singularity.”

What’s the Singularity, you ask? Well, here’s how Grok answered when I asked it:

The technological singularity (often just called “the singularity”) is a hypothetical future point where technological progress—driven primarily by artificial intelligence—accelerates so dramatically that it becomes uncontrollable, irreversible, and fundamentally unpredictable from our current human perspective.

What will the interim look like between now and the fulfillment of this singularity-state?

Will AI fizzle out, despite the predictions of the brightest minds on the planet, as models fail to reach their potential and massive investments crater national economies?

Or will AI do everything it’s projected to do?

If it’s the latter, will there be AI wars, with humans removed from the battlefield, with bots fighting bots and drones fighting drones? Will our race to power AI fuel our expansion into space, as we build datacenters and orbital solar arrays to harvest the limitless energy of the largest fusion reactor in our quadrant of the universe?

Will we remain the masters, or will AI completely surpass us? Will it take over and cut us out of the decision-making loop for the planet?

If so, will it kill us off as an existential threat, or keep us inside a “walled garden” of its own design, as a kind of benevolent caretaker of its own creators, an army of embodied bots like a benign but resolute hivemind catering to our every need but keeping us in our place?

Will the remainder of human existence be relegated to a kind of “golden cage,” where we have everything we want and need but no control over our own destiny?

We simply can’t be certain. But whatever the answers to these questions are, they’re coming fast.

