The Era of "Pics Or It Didn't Happen" Is Dead and Buried

When everything looks real, nothing can be assumed to be

A pair of images of an incredibly cool-looking frog appears in my timeline, under an account I follow but know nothing about.

The caption identifies it as a Galaxy frog and names a photographer, but links to no source.

I click the Grok button at the top of the post, and the agent appears in the sidebar, doing a brief analysis of the post.

“Is it real?” I ask.

Grok misunderstands me, and begins explaining that the species is, in fact, real:

Yes, the Galaxy Frog (Melanobatrachus indicus) is a real species of frog native to the wet evergreen forests of India’s Western Ghats. It’s a rare, endangered amphibian in the Microhylidae family, known for its starry black skin with iridescent blue spots and orange underbelly…

The explanation goes on. I re-phrase the question to make clear that I’m asking if the photos of the frog are real. Grok rattles off a reply:

Yes, the photos in that X post (credited to Hadlee Renjith) appear to be real photographs of the actual Galaxy Frog (Melanobatrachus indicus), not manipulated, photoshopped, or AI-generated. The species is well-documented by scientists, conservation organizations (like the Zoological Society of London and IUCN-affiliated reports), and citizen science platforms such as iNaturalist, where verified observations include photos showing the exact same distinctive features: jet-black skin with iridescent blue/white starry spots on top and bright orange/reddish underparts…

Satisfied, I retweet the post, with the caption, “That is the coolest-looking frog I’ve ever seen. And yes, it appears to be real.”

Curious, I feed image details into Grok Imagine and Midjourney to see what they can produce. It takes a little tweaking, but I get something close enough that if I saw it in my timeline, I wouldn’t be sure.

Grok version:

Midjourney version:

For a very long time on the internet, there was an oft-repeated axiomatic rule for determining the authenticity of content: “Pics or it didn’t happen.”

But “pics or it didn’t happen” is dead. Buried. Pushing up daisies. Bloomin’ deceased.

I talked about this on Episode 3 of the MTS Podcast with Kale Zelden:

Videos show up in my feed from AI producers all the time. Many, like the following piece of brilliant satire about our AI obsession from HeavyPulp, are very obviously machine generated:

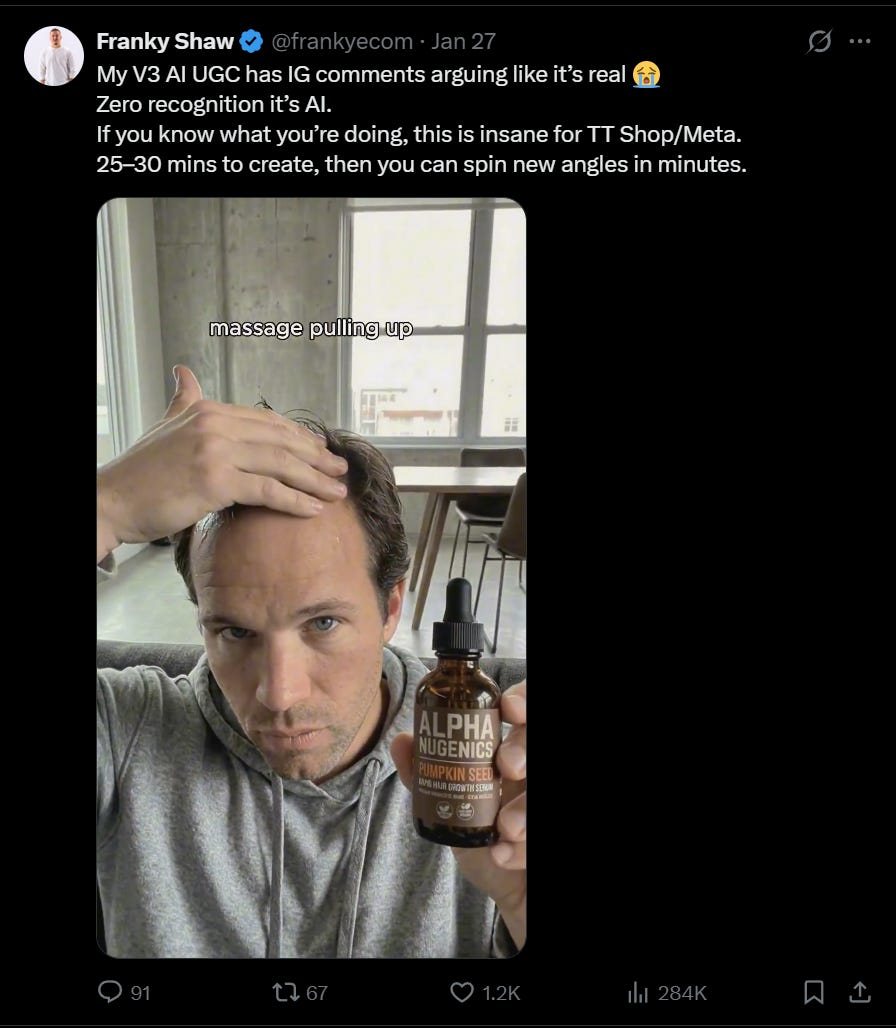

But there’s an increasing amount of “is it real or not” content coming up, and it’s getting harder and harder to tell. For example, this UGC (User Generated Content) social media ad for pumpkinseed oil, of all things, for hair regrowth:

How do I know it’s fake? Because the guy who made it said so:

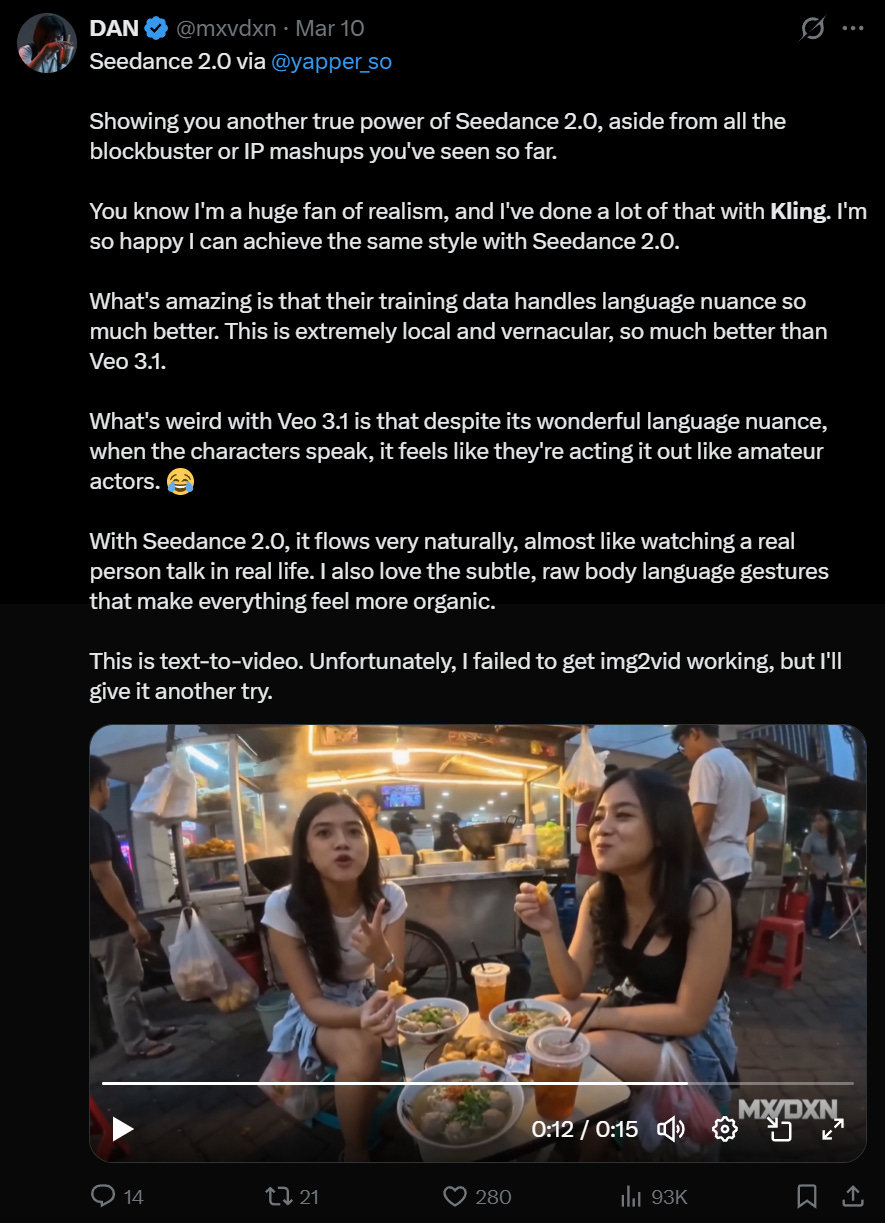

Or how about this scene of some girls eating street food in Indonesia?

Again, the reason I know for sure is that the guy who made it said so:

And as this proliferates, it creates new problems.

This past weekend, X Head of Product, Nikita Bier, announced that creators would be getting extra proceeds in their revenue sharing because of all the fake war videos and content that was being identified on the platform and rooted out:

I was out grabbing a late-night burger after working until almost 10 PM when I saw that I had received a few hundred extra dollars in my X payout as a result of this, which I certainly appreciated because my cashflow is still negative at the moment. (I will be paying a few bills today that’ll make short work of that!)

But the real issue here is the interplay between Force 2 (AI) and Force 5 (The Reality Crisis) from my Global Storm series. X is making the correct move, because fake videos of real news events spread like wildfire, misinforming viewers and serving as propaganda.

And the more widely known this phenomenon is, the less trustworthy all image and video-based content becomes. Which brings us to our conspiracy du jour.

For days, there has been speculation running rampant around social media that Bibi Netanyahu was actually killed by an Iranian munition, and that the Israeli government is running cover for his absence by posting AI-generated videos of him as “proof of life.” The absolute spaz festival this has induced, particularly among the Neo-Antisemitic Horde that is growing by leaps and bounds online, has produced a spectacle of brain-damaged takes.

But then I watched one of the videos today, and I saw something that threw me for a loop.

In the video, Netanyahu is walking and talking (the whole video is in Hebrew) with people on a terrace. It was posted by Netanyahu’s X account this morning:

But viewers noticed something odd at around the 29-second mark of the original video. A guy who goes by the name “Lord Bebo” posted a zoomed-in, slowed-down clip of the video that focuses on Netanyahu’s hand. I grabbed my own screencap from that clip at the specific moment where the anomaly happens, and scrubbed the timeline back and forth multiple times so you can see it: Netanyahu’s ring simply vanishes from his hand. (It reappears later in the video):

Now, there are multiple possible explanations for this. It could be a very good AI video, but there’s a lot to account for in that explanation. The original video is a minute and thirty-one seconds long, which is pretty extended for an AI video. It has a number of distinct individuals in it, the voices sound natural and are synced up — there’s a lot of “real” factor that AI tends to mess up (though not always) in extended clips.

It could also be a real video with a digital compression artifact, since modern cameras use compression to save space and can create visual glitches, although I’ve never seen one this glaring.

It could also, if it was a real video filmed by a phone camera, be the result of built-in AI correction software that created the error. If you’ve ever used “portrait mode” on an iPhone, you’re probably familiar with the software-based softening effects of that mode. You can certainly see some artificial-looking depth of field in the full video, which lends credence to this theory.

I’m not a forensic video analyst. I honestly don’t know. But the fact that an awful lot of people are spending their Monday very fervently debating whether this video is real or fake shows us the quandary we’re in. And people with access to advanced AI video models are already making absurd parody videos to show how easily such things can be faked:

Absolutely nothing can be presumed to be real anymore, unless you’ve seen it with your own eyes. And then you run into the same difficult burden of proof that’s always come with eyewitness testimony.

I’ve strongly believed for some time that AI video needs to be regulated in a way that forces model creators to embed a digital (not visible) watermark in their videos so they can be checked for authenticity. Maybe there’s a blockchain solution. Maybe it’s something else. But there needs to be a way to check whether a video has an authentic provenance.
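For a rough sense of what "checking provenance" could mean in practice, here is a minimal Python sketch. It is not a watermark embedded in the pixels, and it is not how any real platform or model vendor does it. It only shows the simplest related idea: the creator issues a cryptographic tag for the exact bytes of a file, and anyone holding the key can later confirm the file has not been altered. The key, the function names, and the sample payload are all made up for illustration; a real scheme would more likely use public-key signatures so that verifying does not require the secret.

```python
import hashlib
import hmac

# Hypothetical secret held by whoever produced the video (assumption).
PROVIDER_KEY = b"demo-key-not-for-real-use"


def sign_video(video_bytes: bytes, key: bytes = PROVIDER_KEY) -> str:
    """Return a hex tag tied to both the key and the exact video bytes."""
    return hmac.new(key, video_bytes, hashlib.sha256).hexdigest()


def verify_video(video_bytes: bytes, tag: str, key: bytes = PROVIDER_KEY) -> bool:
    """Return True only if the bytes match the tag that was issued for them."""
    expected = sign_video(video_bytes, key)
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(expected, tag)


original = b"stand-in for the raw bytes of a video file"
tag = sign_video(original)

print(verify_video(original, tag))         # untouched file
print(verify_video(original + b"x", tag))  # file altered after signing
```

The catch is the one raised in the next paragraph: a tag like this only helps if the tool that made the video agrees to issue it, and re-encoding or screen-recording a clip changes the bytes and breaks the match.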

Which is not to say savvy hoaxers won’t find ways around that. Perhaps they’ll vibe code spinoff models without the constraints. There don’t seem to be any rules anymore.

All I know for sure is that we’re now required to engage in levels of skepticism that are, frankly, exhausting, if we don’t want to be fooled. And a lot of people without that discretion are going to lose their marbles going down every conspiracy rabbit hole trying to figure out “hidden truths” and “secrets they don’t want you to know.”

If I don’t laugh out loud again this week, the portrait meme at the top of the post along with “spaz festival” will tide me over.

Yes, I'm totally frustrated trying to watch newsy stories on YouTube. I can't tell if the human is real or not. So I am limiting myself to watching "tried and true" regulars. Eventually these things will work themselves out.